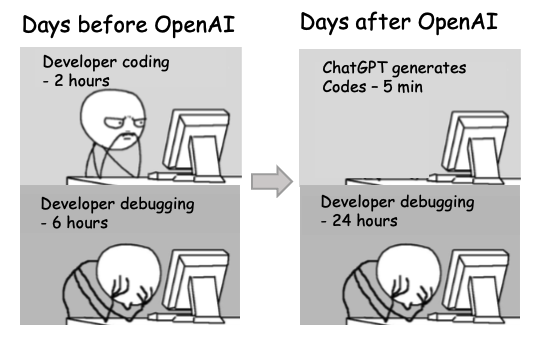

Programming is hard, or at least it used to be. AI code generators like Amazon’s CodeWhisperer, DeepMind’s AlphaCode, GitHub’s Copilot, Replit’s Ghostwriter and many others now make programming easier, at least for some people, some of the time. What opportunities and challenges do these new tools present for educators? Join us on Zoom to discuss an award-winning paper on this very topic by Brett Becker, Paul Denny, James Finnie-Ansley, Andrew Luxton-Reilly, James Prather and Eddie Antonio Santos at University College Dublin, the University of Auckland and Abilene Christian University. [1] For our monthly ACM journal club meetup, we’ll be joined by two of the co-authors, who will present a lightning talk to kick off our discussion. Here’s the abstract of their paper:

The introductory programming sequence has been the focus of much research in computing education. The recent advent of several viable and freely-available AI-driven code generation tools present several immediate opportunities and challenges in this domain. In this position paper we argue that the community needs to act quickly in deciding what possible opportunities can and should be leveraged and how, while also working on overcoming or otherwise mitigating the possible challenges. Assuming that the effectiveness and proliferation of these tools will continue to progress rapidly, without quick, deliberate, and concerted efforts, educators will lose advantage in helping shape what opportunities come to be, and what challenges will endure. With this paper we aim to seed this discussion within the computing education community.

All welcome. As usual, we’ll be meeting on Zoom at 2pm BST (UTC+1); details are at sigcse.cs.manchester.ac.uk/join-us. Thanks to Sue Sentance at the University of Cambridge for nominating this paper for discussion.

See also linkedin.com/posts/duncanhull_ai-codewhisperer-alphacode-activity-7051921278923915264-7i_5

References

- Brett A. Becker, Paul Denny, James Finnie-Ansley, Andrew Luxton-Reilly, James Prather and Eddie Antonio Santos (2023). Programming Is Hard – Or at Least It Used to Be: Educational Opportunities and Challenges of AI Code Generation. In Proceedings of the 54th ACM Technical Symposium on Computer Science Education: SIGCSE 2023, pages 500–506. DOI: 10.1145/3545945.3569759